It’s not DNS: Ensuring high availability in a hybrid cloud environment

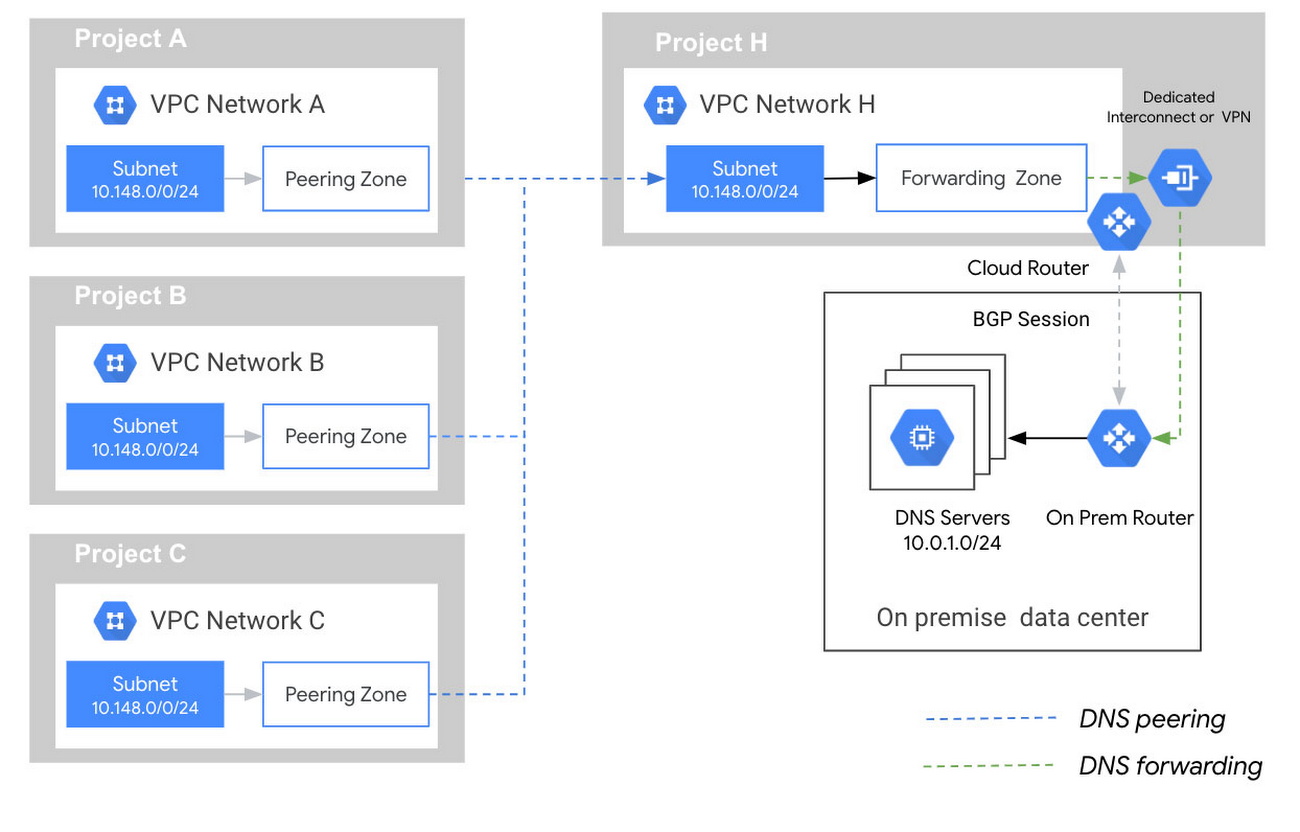

Our customers have multi-faceted requirements around DNS forwarding, especially if they have multiple VPCs that connect to multiple on-prem locations. As we discussed in an earlier blog post, we recommend that customers utilize a hub-and-spoke model, which helps get around reverse routing challenges due to the usage of the Google DNS proxy range.

But in some configurations, this approach can introduce a single point of failure (SPOF) within the hub network, and if there are connectivity issues within your deployment, it could cause an outage in all your VPC networks. In this post, we’ll discuss some redundancy mechanisms you can employ to ensure that Cloud DNS is always available to handle your DNS requests.

Adding redundancy to the hub-and-spoke model

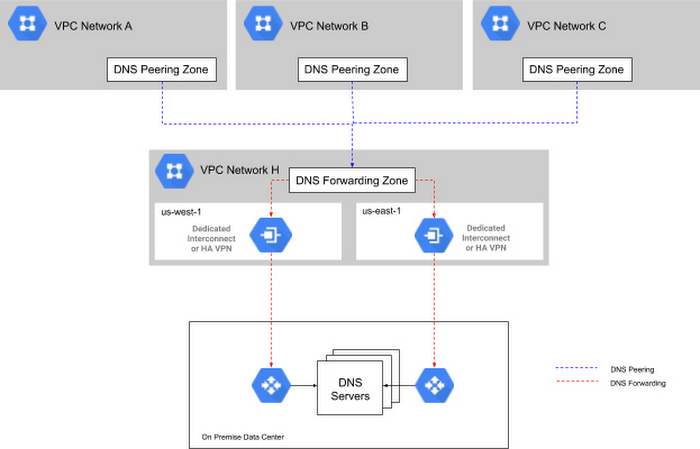

If you need a redundant hub-and-spoke model, consider a design where the DNS-forwarding VPC network spans multiple Google Cloud regions, and where each region has a separate path (via interconnect or other means) to the on-prem network. In the accompanying diagram, VPC Network H spans us-west1 and us-east1, and each region has a dedicated interconnect to the customer’s on-prem network. The other VPC networks are then peered with the hub network.

This scenario provides highly available DNS: the VPC can egress queries over either interconnect path, and responses can return via either path. The outbound request always leaves Google Cloud through the interconnect location nearest to where the request originated; if that path fails, it uses the other interconnect path.
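The egress behavior described above can be sketched as a small selection function. This is only an illustration, not how Cloud DNS is implemented: the `Interconnect` class and `pick_egress` function are hypothetical names, and "nearest" is simplified to "same region as the request".

```python
from dataclasses import dataclass


@dataclass
class Interconnect:
    """Hypothetical model of one interconnect path to on-prem."""
    region: str
    healthy: bool = True


def pick_egress(origin_region, interconnects):
    """Prefer the healthy interconnect in the originating region.

    Falls back to any other healthy interconnect when the local one is down.
    "Nearest" is approximated as "same region" (an assumption of this sketch).
    """
    healthy = [ic for ic in interconnects if ic.healthy]
    if not healthy:
        raise RuntimeError("no healthy interconnect path to on-prem")
    for ic in healthy:
        if ic.region == origin_region:
            return ic
    return healthy[0]


# Hub network H spans the two regions named in the post.
paths = [Interconnect("us-west1"), Interconnect("us-east1")]
print(pick_egress("us-west1", paths).region)

# Simulate a failure of the west path: queries fail over to the east path.
paths[0].healthy = False
print(pick_egress("us-west1", paths).region)
```

Because both interconnects attach to the same hub VPC, either path remains valid for the return traffic, which is what makes this design tolerant of a single path failure.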

Note that while Cloud DNS always routes the request to on-prem through the interconnect closest to the originating region, the path that responses take from the on-prem network back to Google Cloud depends on your WAN routing. With equal-cost routing in place, you may see asymmetric routing, where a response returns over a different path than the request took, which can add resolution latency in some cases.

Alternative DNS setups

A highly available hub-and-spoke model isn’t an option for all companies, though.

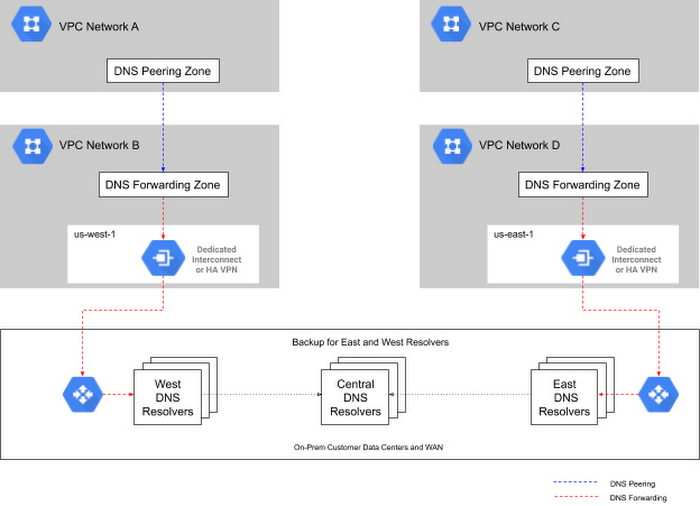

Some organizations’ IP address space consists of a mixture of address blocks across many locations. This often happens to companies as a result of a merger or acquisition, which can make it difficult to set up a clean geo-based DNS. Let’s look at a different DNS setup and how customers may have to adapt for failures of the DNS stack.

To understand the problem, consider the case of a Google Cloud customer that was managing U.S. East Coast DNS resolvers for East Coast-based VPCs, and U.S. West Coast resolvers for West Coast-based VPCs, in order to reduce latency for DNS queries. The challenge arose when it came time to build out redundancy. Specifically, the customer wanted a third set of resolvers to back up both the East Coast and West Coast resolvers in the event that either set failed.

Unfortunately, a setup like Figure 1.3 could cause issues in a failure scenario.

In this setup, a failure of the West Coast DNS resolvers would cause queries to be forwarded to the backup servers running in the central US, with source IP addresses drawn from Google Cloud’s DNS proxy server address range (35.199.192.0/19). But because there are two VPCs, the WAN sees two different routes back to that range, and it would typically send the responses via the closest link advertising it. In this case, that would be the East Coast interconnect. Because the East Coast interconnect is attached to a different VPC than the one that originated the request, the Google Cloud DNS proxies would drop the response (since the Virtual Network ID (VNID) of the return packets would be different from the VNID for the East Coast VPC). The problem here lies with the routing and subnet advertisements, not with the DNS layer itself.
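To make the failure mode concrete, here is a toy model of the WAN's return-path choice. Only the 35.199.192.0/19 range comes from the scenario above; the VPC names, site names, and link costs are made-up values chosen so that the central site is closer to the East Coast interconnect, and real WAN routing is considerably more involved.

```python
import ipaddress

# Google Cloud's DNS proxy range, as given in the post.
DNS_PROXY_RANGE = ipaddress.ip_network("35.199.192.0/19")

# Hypothetical WAN view: both interconnects advertise the same proxy range.
# "cost" is an illustrative distance from each on-prem resolver site.
ADVERTS = [
    {"vpc": "vpc-west", "prefix": DNS_PROXY_RANGE,
     "cost": {"west": 1, "central": 3, "east": 5}},
    {"vpc": "vpc-east", "prefix": DNS_PROXY_RANGE,
     "cost": {"west": 5, "central": 2, "east": 1}},
]


def return_vpc(source_ip, resolver_site, adverts):
    """Return the VPC that the WAN would deliver the DNS response to.

    Chooses the longest matching prefix, then the lowest cost from the site.
    """
    src = ipaddress.ip_address(source_ip)
    candidates = [a for a in adverts if src in a["prefix"]]
    best = min(
        candidates,
        key=lambda a: (-a["prefix"].prefixlen, a["cost"][resolver_site]),
    )
    return best["vpc"]


def response_accepted(origin_vpc, source_ip, resolver_site, adverts):
    """The response is only usable if it lands in the VPC that sent the query."""
    return return_vpc(source_ip, resolver_site, adverts) == origin_vpc


# Normal operation: the West Coast VPC queries the West Coast resolvers.
print(response_accepted("vpc-west", "35.199.192.10", "west", ADVERTS))

# Failover: the same VPC is answered by the central backup resolvers,
# and the response is delivered to the wrong VPC.
print(response_accepted("vpc-west", "35.199.192.10", "central", ADVERTS))
```

Since both advertisements cover the identical prefix, nothing in the source address tells the WAN which VPC sent the query, so the tie is broken purely by proximity.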

So the question becomes: how do you support network topologies with multiple VPCs and DNS resolvers while still providing HA DNS resolvers on-prem?

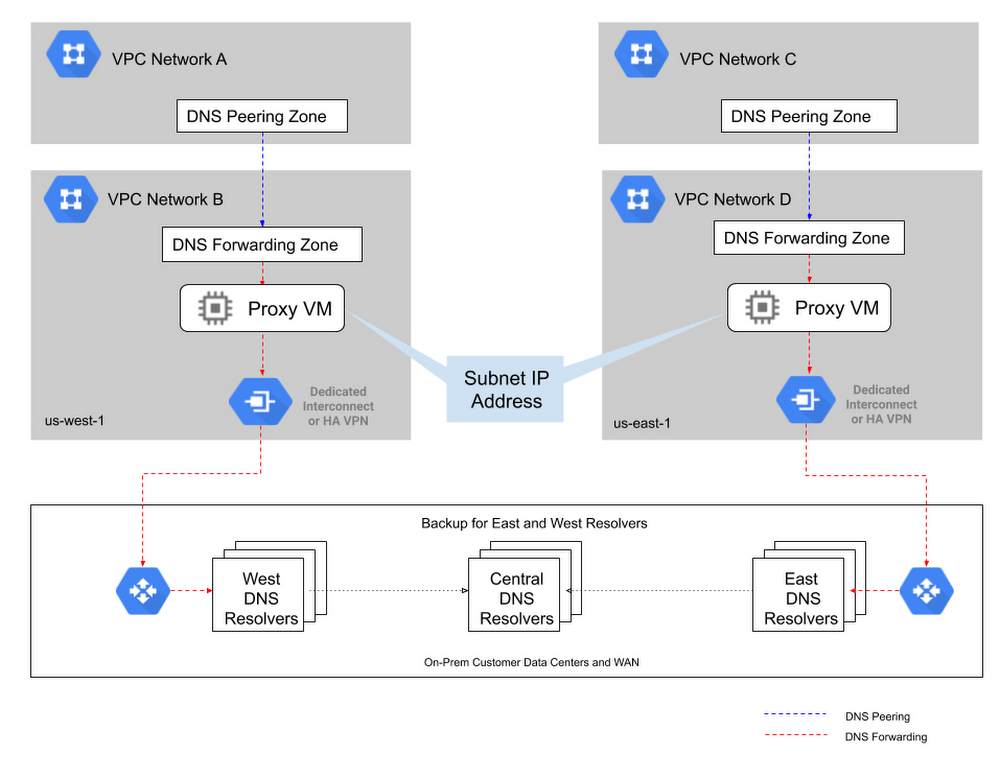

One approach is to proxy the DNS request, as shown in Figure 1.4. By forwarding all DNS requests to a proxy set up within the VPC (or even within a specific subnet, depending on your desired granularity), you end up with VPC-specific source IP addresses, making it easy for the on-prem infrastructure to route its responses back to the correct VPC. This also simplifies on-prem firewall configuration, because you no longer need to open your firewalls to Google’s DNS proxy IP range. Since you can specify multiple IP addresses for DNS forwarding, you can run multiple proxy VMs for additional proxy redundancy and further bolster your availability.
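As a rough illustration of what such a proxy VM does, the following is a minimal UDP forwarder sketch with failover across several on-prem resolvers. It is not a production DNS proxy (most deployments would use an established DNS server in forwarding mode); the function names are hypothetical, it handles one query at a time, and it ignores TCP and large responses.

```python
import socket


def forward_query(query, upstreams, timeout=2.0):
    """Send a raw DNS query to each upstream in order; return the first reply.

    `upstreams` is a list of (ip, port) tuples using IP literals. The query
    leaves from this VM's own VPC address, so on-prem sees a VPC-specific
    source IP rather than the shared DNS proxy range.
    """
    for addr in upstreams:
        with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
            sock.settimeout(timeout)
            try:
                sock.sendto(query, addr)
                reply, peer = sock.recvfrom(4096)
            except OSError:
                continue  # timed out or unreachable: try the next resolver
            if peer == addr:  # ignore datagrams from unexpected senders
                return reply
    raise TimeoutError("no on-prem resolver answered")


def serve(listen_addr, upstreams):
    """Relay queries received on listen_addr to the on-prem resolvers."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as server:
        server.bind(listen_addr)
        while True:
            query, client = server.recvfrom(4096)
            try:
                server.sendto(forward_query(query, upstreams), client)
            except TimeoutError:
                pass  # drop the query; the client is expected to retry
```

A proxy VM would call something like `serve(("0.0.0.0", 53), [...])` with its own list of on-prem resolver addresses, and running the same process on two or more VMs gives the additional forwarding targets mentioned above.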

Highly available DNS: the devil is in the details

DNS is a critical capability for any enterprise, but setting up highly available DNS architectures can be complex. It’s easy to build a highly redundant DNS stack that can handle many failure scenarios, but overlook the underlying routing until something fails and DNS queries are unable to resolve. When designing a DNS architecture for a hybrid environment, be sure to take a deep look at your underlying infrastructure, and think through how failure scenarios will impact DNS query resolution.
