Durability, meaning how well your data is protected from loss or corruption, is a critical feature of any data storage service. We often receive questions about how to design workloads for durability and what durability assumptions a workload can make about Persistent Disk, Google Cloud’s reliable, high-performance block storage for Compute Engine virtual machine instances. To help, we recently published details about how we design Persistent Disk for extraordinary durability.

When we say a disk is “designed for” a given durability, that figure reflects the by-design probability that a typical disk does not lose data in a typical year, using a set of assumptions about:

- Hardware failures
- The likelihood of catastrophic events
- Isolation practices and engineering processes in Google data centers
- The internal encodings used by each disk type

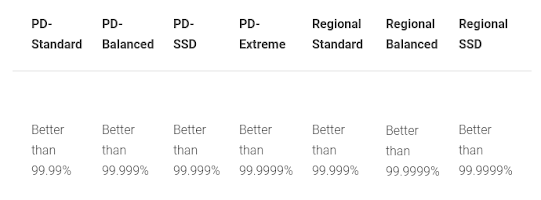

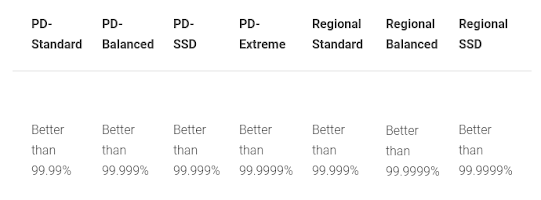

Specifically, here are all the Persistent Disk types we offer:

To put these numbers in context, 99.999% durability corresponds to an annual loss probability of 0.001% per disk: with 1,000 disks, you would expect to lose about one disk per hundred years. Here is some background on how we accomplish this, and how you can achieve ultra-high durability.
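The arithmetic behind a figure like this can be sketched in a few lines. This is only a back-of-the-envelope illustration: it treats one minus the durability as a per-disk annual loss probability and assumes disks fail independently, which is our simplifying assumption rather than a statement about how Persistent Disk behaves. The function names are hypothetical. Under those assumptions, 1,000 disks over 100 years amount to 100,000 disk-years, or roughly one expected loss.

```python
def expected_losses(durability: float, disks: int, years: float) -> float:
    """Expected number of lost disks over the period.

    Assumption: (1 - durability) is the per-disk probability of loss in a year.
    """
    annual_loss_probability = 1.0 - durability
    return annual_loss_probability * disks * years


def probability_of_no_loss(durability: float, disks: int, years: int) -> float:
    """Probability that no disk is lost at all.

    Assumption: every disk-year is an independent trial, which real-world
    correlated failures would violate.
    """
    return durability ** (disks * years)


if __name__ == "__main__":
    # 1,000 disks at 99.999% annual durability, observed for 100 years.
    print(f"{expected_losses(0.99999, 1000, 100):.2f}")         # about 1.00
    print(f"{probability_of_no_loss(0.99999, 1000, 100):.3f}")  # about 0.368
```

The same two functions can be reused with the durability figure of any disk type and the size of your own fleet.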

How Google Cloud designs for durability

Persistent Disk manages physical disks and data distribution for you to ensure redundancy and optimal performance. Persistent Disk durability is always the same as or higher than three-way replication—each Persistent Disk byte is stored in three or more locations distributed across separate fault domains within a given Compute Engine zone.

Because of this, data loss events are extremely rare and are usually the result of coordinated hardware failures, software bugs or a combination of the two. Our internal goal is zero data loss events per quarter.

Persistent Disks have built-in redundancy to protect your data against equipment failure and to ensure data availability through datacenter maintenance events. Checksums are calculated for all Persistent Disk operations, so we can ensure that what you read is what you wrote. Google also takes many steps to mitigate the industry-wide risk of silent data corruption.
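To make the checksum idea concrete, here is a minimal sketch of the general technique: store a checksum next to each block on write, and verify it on read. It is a toy in-memory example using CRC32 from the Python standard library. The class name, the choice of CRC32, and the block layout are all assumptions made for illustration and do not describe Persistent Disk's internal implementation.

```python
import zlib


class ChecksummedStore:
    """Toy block store that keeps a CRC32 next to each block (illustrative only)."""

    def __init__(self) -> None:
        # Maps block_id -> (data, checksum computed at write time).
        self._blocks = {}

    def write(self, block_id: int, data: bytes) -> None:
        """Store the data together with its checksum."""
        self._blocks[block_id] = (data, zlib.crc32(data))

    def read(self, block_id: int) -> bytes:
        """Return the data only if it still matches the stored checksum."""
        data, stored_checksum = self._blocks[block_id]
        if zlib.crc32(data) != stored_checksum:
            raise IOError(f"checksum mismatch on block {block_id}")
        return data


if __name__ == "__main__":
    store = ChecksummedStore()
    store.write(0, b"what you wrote")
    print(store.read(0) == b"what you wrote")
```

In a replicated system, a failed verification would typically be handled by reading another replica rather than returning an error, but that step is outside the scope of this sketch.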

Every storage device in a Google datacenter is continuously monitored, both for errors returned during operations and through internal diagnostics. Data on devices that fail is re-replicated within minutes, and devices that are predicted to fail are safely drained and swapped.

Security

In addition to being stored durably, your data is always encrypted in transit and at rest; there are no options or potential misconfigurations that can result in data being stored unencrypted. You also have the option to use customer-supplied encryption keys (CSEK) or customer-managed encryption keys (CMEK) to encrypt the disk encryption key. Persistent Disk infrastructure performs automatic maintenance and replication without access to customer encryption keys. For a more detailed look at how we tackle physical security, data access, data disposal, and access logging, please see the whitepapers published at https://cloud.google.com/security/.

Regional disks and snapshots

As an option for even higher durability, Regional Persistent Disks synchronously replicate data across two zones, providing protection if a zone becomes unavailable, even for an extended period of time. With this capability, a workload can fail over to a healthy zone and operate on intact data, experiencing no data loss. Because the data is durable across zones, the secondary replica can be used to restore availability in a second zone in under one minute.

Taking periodic snapshots of disks provides yet another layer of protection. Snapshots provide a backup copy of the data on your disk that is stored internally in Cloud Storage, providing even higher durability and recovery capabilities for disks. Snapshots also have the advantage of enabling recovery to earlier disk states that were backed up in the past. We recommend scheduling regular snapshots of any disk with important data.
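Recovering to an earlier disk state comes down to choosing the most recent snapshot taken at or before the point in time you want to return to. The sketch below shows that selection over a plain list of timestamps. The function name and the daily schedule in the usage example are hypothetical, and the code does not call any Google Cloud API.

```python
from bisect import bisect_right
from datetime import datetime, timedelta


def latest_snapshot_before(snapshot_times, target):
    """Return the most recent snapshot time at or before `target`.

    Returns None when every snapshot was taken after `target`.
    """
    ordered = sorted(snapshot_times)
    # Count how many snapshots were taken at or before the target time.
    index = bisect_right(ordered, target)
    return ordered[index - 1] if index else None


if __name__ == "__main__":
    # Hypothetical schedule: one snapshot per day at midnight for five days.
    first = datetime(2024, 1, 1)
    schedule = [first + timedelta(days=day) for day in range(5)]

    # Data was known to be good at noon on the third day.
    known_good = first + timedelta(days=2, hours=12)
    print(latest_snapshot_before(schedule, known_good))
```

With a regular schedule, the writes at risk are roughly those made since the last snapshot, so a shorter snapshot interval narrows that window at the cost of storing more snapshots.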

All Persistent Disk types provide extraordinary durability in addition to the price/performance you expect. To get started, check out more details about disk options.

David Seidman

Product Manager
